The diff lands in the queue at 1,200 lines. The reviewer opens it, scrolls to the bottom, leaves a comment about a variable name, and clicks approve. Nobody reads it all the way through. Nobody has time. The PR merges. Two sprints later something breaks and nobody can trace it back to where it came from. This is not a story about one team. This is the pattern I keep seeing everywhere AI code generation has been handed to engineers without anyone defining what a reviewable pull request looks like.

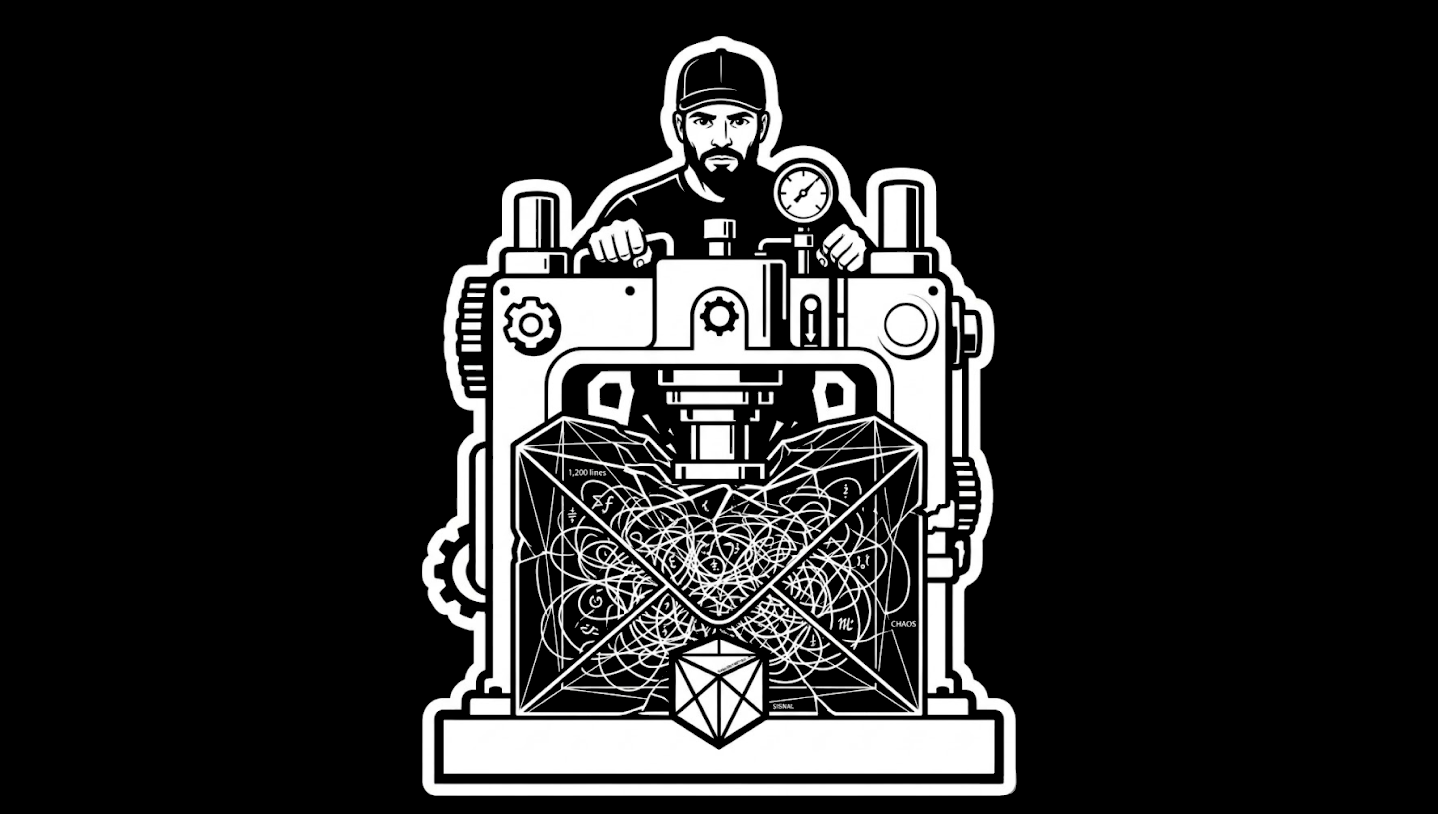

The model fills the envelope until leadership shrinks the envelope. And the reason most teams haven't shrunk it is that nobody has written down what "one pull request" is actually supposed to carry.

When the Standard Is Implicit, the Standard Is Whatever Is Convenient

Most engineering teams have a vague sense that PRs should be reasonably sized. Reviewers know when a diff is too big. Engineers know when they're shipping too much at once. But knowing something and writing it down are different things. What isn't written down becomes whatever is convenient under delivery pressure.

I say pattern because I've watched it from the outside and I've lived it from the inside. Before we built our merge policy, my own team had the same implicit standard everyone has: PRs should be reasonably sized. Nobody had written down what reasonably sized meant. AI-assisted output volume climbed and we kept reviewing the way we always had, which meant we weren't really reviewing at all. We were clearing the queue.

I've watched the same thing happen across teams at different scales. AI code generation shows up. Output volume triples. PR sizes climb. Review times get longer. And somewhere in that climb, the cultural norm shifts without anyone deciding it should. The rubber stamp becomes the default not because reviewers stopped caring but because they're rational actors inside a system that never told them what a reviewable PR actually is.

If you have ten engineers generating AI-assisted code and each of them is merging three times what they used to, your review queue has become something nobody designed for. And if you haven't updated your definition of "good" to match the new volume, you're running a 2022 process on a 2026 codebase.

Shallow and Vertical

On my team, we fixed this with what we call shallow vertical pull request standards.

Shallow means not too many lines. A PR that can be read, understood, and reviewed in a single sitting. Not a tour of the entire codebase. Not a week's worth of changes packaged together because breaking them apart felt like extra work. Shallow means the reviewer can hold the whole thing in their head at once.

Vertical means one concern, all the way through. Not horizontal across three features and two services. Not "I was in this area anyway so I also fixed this other thing." One behavior, one slice, from top to bottom. The PR does one thing and does it completely.

Together those two constraints eliminate most of the PRs that become rubber stamps. A shallow vertical PR is reviewable by definition. It has a clear scope, a readable size, and a single purpose the reviewer can actually evaluate. If a PR fails either test, it's not ready for review yet. It's not a PR yet. It's a work in progress that needs to be broken up.

We set our line limit based on DORA metrics data. Not a gut feel. Not what sounded right in a meeting. We looked at our lead time for changes at different PR sizes and found the inflection point where review quality and cycle time both degraded. The data made the conversation easy. It wasn't me telling engineers their PRs were too big. It was the data showing what PR size correlated with slower delivery and more rework. That number became the ceiling. Any PR above it gets closed automatically.

Not flagged. Not sent back with a polite comment. Closed.
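
As a rough illustration of that kind of analysis (a sketch under stated assumptions, not the exact tooling described above), suppose you can export each merged PR as a pair of lines changed and lead time in hours. The bucket size of 100 lines, the 1.5x jump factor, and the function names are all hypothetical choices for the example. The idea is to group PRs by size, take the median lead time per group, and pick the last size band before lead time clearly departs from the small-PR baseline.

```python
from statistics import median


def median_lead_time_by_bucket(prs, bucket_size=100):
    """Group (lines_changed, lead_time_hours) pairs into size buckets.

    Returns {bucket_upper_bound: median_lead_time_hours}, ordered by bound.
    bucket_size is an assumed value; pick one that suits your data.
    """
    buckets = {}
    for lines_changed, lead_time_hours in prs:
        # A 250-line PR lands in the bucket whose upper bound is 300.
        upper = ((max(lines_changed, 1) - 1) // bucket_size + 1) * bucket_size
        buckets.setdefault(upper, []).append(lead_time_hours)
    return {upper: median(times) for upper, times in sorted(buckets.items())}


def suggest_ceiling(medians, jump_factor=1.5):
    """Return the largest bucket bound reached before median lead time
    first exceeds jump_factor times the smallest bucket's median.

    jump_factor is an assumption, not a DORA-defined threshold.
    """
    bounds = sorted(medians)
    baseline = medians[bounds[0]]
    ceiling = bounds[0]
    for bound in bounds:
        if medians[bound] > baseline * jump_factor:
            break
        ceiling = bound
    return ceiling


# Made-up sample data: (lines_changed, lead_time_hours) per merged PR.
SAMPLE_PRS = [
    (40, 4), (90, 5), (150, 5), (180, 6),
    (260, 6), (290, 7), (350, 14), (380, 16), (900, 40),
]

if __name__ == "__main__":
    medians = median_lead_time_by_bucket(SAMPLE_PRS)
    print(medians)
    print("suggested ceiling:", suggest_ceiling(medians))
```

With real data you would want far more than a handful of PRs per bucket, and you would likely look at rework alongside lead time before settling on a number.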

The Automation Is the Policy

That last part is what most teams skip. They write the standard in a wiki page, hold a team meeting about it, and move on. The standard lives there for three months until delivery pressure builds and the first exception gets made. Then the exception becomes the new standard, which is just the old implicit standard with a document attached to it.

We automated enforcement because we knew ourselves. The uncomfortable conversation about a PR being too big is one most engineers don't want to have and most reviewers will avoid if they can find a reason to. Automation removes the conversation. The system closes the PR. The engineer breaks it up. The culture doesn't depend on someone's willingness to hold the line in the moment.

This is the part that actually changed the behavior. Not the document. The automation made the policy real. A standard that only exists when someone is brave enough to enforce it is not a standard. It's a suggestion with a header.

What "Good" Means on the Page

Writing down shallow vertical standards is step one. Anchoring the line limit to DORA data is step two. Automating enforcement is step three. But there's a fourth piece that doesn't get enough attention.

The standard also has to define what the reviewer is responsible for. Not just the size of the PR but the quality of the review. What does "approved" mean? Did you read it? Did you understand the scope? Did you check that it does one thing and doesn't break anything adjacent to that one thing?

Most teams have never written this down. The reviewer's job is implicit, and what's implicit becomes a checkbox. Scroll to the end. Leave a comment. Click approve.

When we defined the review standard alongside the PR standard, something shifted. Reviewers knew exactly what they were being asked to do. Engineers knew what they were asking reviewers to evaluate. The implicit negotiation about what "approved" actually means got replaced by a written one, and the written one didn't leave much room for the rubber stamp. The standard created accountability in both directions. The engineer is accountable for the size and scope of what they submit. The reviewer is accountable for what their approval actually means.

We held ourselves to it. Not perfectly. Not without friction. But the friction was the point. The friction meant the standard was real.

Three Things Before the End of the Week

This isn't a platform problem. It doesn't require a new tool or a two-week sprint to implement. It requires a decision and then follow-through.

Write down shallow vertical PR standards in one paragraph. What is a PR allowed to carry? One behavior. One slice. What is the line ceiling? Anchor it to your DORA data if you have it; lead time for changes will show you where PR sizes are costing you cycle time. If you don't have DORA data yet, start with five hundred lines as a ceiling and adjust from what the data shows you.

Then automate enforcement. Most CI systems and code hosts can run a check on every PR and close one that exceeds a line count. This is not a complex implementation. It's a policy decision dressed up as a configuration file. The automation makes the policy real in a way a wiki page never will.
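
To make that concrete, here is a minimal sketch of the decision a CI step could make. It assumes the step can get `git diff --numstat` output for the PR (tab-separated added, deleted, and path per file, with `-` for binary files). The function names and the message wording are hypothetical, and the code only returns the decision; actually closing the PR depends on your code host's API or CLI and is left out.

```python
LINE_CEILING = 500  # suggested starting point above; replace with your own number


def changed_lines(numstat_output):
    """Sum added and deleted lines from `git diff --numstat` output."""
    total = 0
    for row in numstat_output.strip().splitlines():
        added, deleted, _path = row.split("\t", 2)
        if added == "-":
            # Binary files report "-" for both counts; skip them.
            continue
        total += int(added) + int(deleted)
    return total


def decide(numstat_output, ceiling=LINE_CEILING):
    """Return ("close", reason) when the PR exceeds the ceiling, else ("ok", reason)."""
    total = changed_lines(numstat_output)
    if total > ceiling:
        return ("close", f"{total} changed lines exceeds the ceiling of {ceiling}; split this PR.")
    return ("ok", f"{total} changed lines is within the ceiling of {ceiling}.")


if __name__ == "__main__":
    # Made-up numstat output for illustration.
    sample = "300\t150\tsrc/app.py\n40\t20\ttests/test_app.py\n-\t-\tdocs/logo.png\n"
    print(decide(sample))
```

Keeping the decision in a pure function like this makes the policy easy to test and easy to change: the ceiling is one constant, and the part that talks to the code host stays a thin wrapper around it.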

Then define what reviewer approval means. One paragraph. What did you actually check? What are you signing off on? When your engineers know what "approved" requires, the approval means something again.

The standard won't be perfect on the first version. Write it anyway. The act of writing it forces the conversation your team hasn't had yet.

The Model Will Fill Whatever Space You Give It

AI code generation is not slowing down. The output volume is not going back to what it was three years ago. The teams that figure out how to review AI-assisted code well are going to move faster than the teams still treating it like it's a one-engineer, one-feature world.

The teams that don't figure it out will keep merging rubber-stamped diffs and wondering why the codebase is getting harder to work in. Why incidents trace back to changes nobody really reviewed. Why the senior engineers are spending more time firefighting than building.

Leadership's job here isn't to review every PR. It's to define what a reviewable PR looks like. Until you write that down, the model fills the envelope. And your team stamps whatever lands in the queue.

That's a leadership problem. Not an engineering one.